80GB HBM3 memory with ultra-high 3TB/s bandwidth

Supports FP8 for faster AI model training and inference

Up to 6X performance boost over A100 in AI workloads

Transformer Engine optimized for LLMs and deep learning

PCIe Gen5 and NVLink support for ultra-fast data transfer

Advanced security and Multi-Instance GPU (MIG) capability

Ideal for data centers, scientific computing, and generative AI

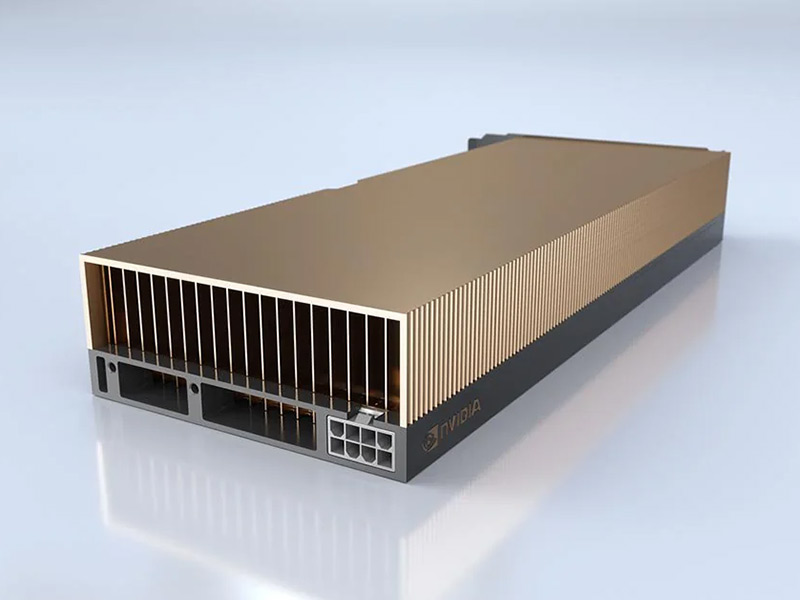

The NVIDIA H100 Tensor Core GPU, built on the Hopper architecture, represents a significant advancement in accelerated computing, offering unparalleled performance for AI, HPC, and data analytics workloads.

At the core of the NVIDIA H100 lies the revolutionary Hopper architecture—a purpose-built design for handling the immense computational demands of AI and high-performance computing (HPC) in the modern era. Hopper builds upon and vastly enhances the capabilities introduced by the previous Ampere architecture, with a renewed emphasis on AI model efficiency, transformer optimization, and energy-efficient throughput.

The H100 is manufactured using TSMC’s advanced 4N process node, which enables the integration of approximately 80 billion transistors within an 814 mm² die, resulting in remarkable silicon density. This allows for an unprecedented level of parallelism, precision, and raw computational power.

The SXM5 variant of the H100 includes up to 132 streaming multiprocessors (SMs), while the PCIe variant houses 114 SMs. These SMs are the fundamental building blocks of the GPU and house its Tensor Cores.

The upgraded Tensor Cores provide performance boosts across multiple data types, including FP64, TF32, BF16, FP16, FP8, and INT8. They are optimized for mixed-precision workloads, which is essential for training deep learning models faster while preserving accuracy.

A standout feature in the Hopper architecture is the Transformer Engine, specifically developed to accelerate the training and inference of transformer-based models—such as GPT, BERT, and other LLMs. This engine dynamically switches between FP8 and FP16 precision depending on layer sensitivity, which maximizes throughput without compromising model fidelity.
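To make the precision trade-off concrete, here is a minimal sketch of per-tensor scaling, the general idea behind fitting values into FP8's narrow numeric range. It is an illustration only and not NVIDIA's Transformer Engine implementation or API. The function names and sample values are hypothetical; the limits used (448 for E4M3, 57344 for E5M2) are the largest finite values of the two common FP8 encodings.

```python
# Illustrative only: per-tensor scaling for FP8, not the Transformer Engine API.

# Largest finite magnitudes of the two common FP8 encodings.
FP8_MAX = {"e4m3": 448.0, "e5m2": 57344.0}


def fp8_scale(values, fmt="e4m3"):
    """Return the factor that maps the largest |value| onto the FP8 maximum."""
    amax = max(abs(v) for v in values)
    if amax == 0.0:
        return 1.0  # all zeros: any scale works, so keep it neutral
    return FP8_MAX[fmt] / amax


def fits_without_scaling(values, fmt="e4m3"):
    """True if every value is already inside the representable FP8 range."""
    return all(abs(v) <= FP8_MAX[fmt] for v in values)


activations = [0.5, -3.0, 896.0]           # hypothetical layer outputs
scale = fp8_scale(activations)             # 448 / 896
scaled = [v * scale for v in activations]  # now within the E4M3 range
print(scale, fits_without_scaling(activations), fits_without_scaling(scaled))
```

A tensor whose values overflow even after scaling, or lose too much precision, is the kind of case where falling back to a 16-bit format makes sense.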

The H100 is equipped with a generous 50 MB of L2 cache, significantly reducing latency and improving access speed to frequently used data. It supports 80 GB of HBM3 (SXM5) or HBM2e (PCIe) high-bandwidth memory, capable of reaching up to 3.35 TB/s bandwidth in the SXM5 configuration. This makes it ideally suited for memory-bound workloads such as large-scale simulations or LLMs.
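As a rough illustration of why bandwidth matters for memory-bound workloads, the sketch below estimates the minimum time needed to read a model's weights once from HBM. The 30-billion-parameter model is a hypothetical example, the helper name is made up for this sketch, and the result is a lower bound that ignores caches, compute, and other overheads.

```python
# Back-of-the-envelope estimate: time to stream a model's weights once from HBM.

def weight_read_time_ms(num_params, bytes_per_param, bandwidth_tb_s):
    """Lower bound in milliseconds for reading every weight exactly once."""
    total_bytes = num_params * bytes_per_param
    bandwidth_bytes_s = bandwidth_tb_s * 1e12
    return total_bytes / bandwidth_bytes_s * 1e3


# Hypothetical 30-billion-parameter model; 3.35 TB/s is the SXM5 peak bandwidth.
fp16_ms = weight_read_time_ms(30e9, 2, 3.35)  # 60 GB of weights
fp8_ms = weight_read_time_ms(30e9, 1, 3.35)   # 30 GB of weights
print(f"FP16: {fp16_ms:.1f} ms, FP8: {fp8_ms:.1f} ms")
```

Halving the bytes per parameter halves this bound, which is one reason lower-precision formats help workloads limited by memory traffic.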

To enable high-throughput multi-GPU configurations, the Hopper architecture integrates advanced interconnects, including fourth-generation NVLink and PCIe Gen5.

Building upon the first-generation MIG introduced in A100, the H100 allows a single GPU to be partitioned into up to 7 isolated GPU instances, each with dedicated SMs, memory, and cache resources. This is a game-changer for multi-tenant and cloud environments, where efficiency and security are paramount.
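The partitioning budget can be sketched as a simple capacity check. The example below assumes profile names in NVIDIA's `<compute>g.<memory>gb` style (for example `3g.40gb`) and a budget of 7 compute slices and 80 GB. The helper names are hypothetical, and real MIG placement rules are stricter than this sum-based check, so treat it as a first-pass sanity test rather than a substitute for the driver's own validation.

```python
import re

# Simplified MIG capacity check; real placement rules are stricter.
MAX_COMPUTE_SLICES = 7   # up to 7 isolated instances per GPU
TOTAL_MEMORY_GB = 80     # assumed 80 GB card


def parse_profile(name):
    """Split a name like '3g.40gb' into (compute_slices, memory_gb)."""
    match = re.fullmatch(r"(\d+)g\.(\d+)gb", name)
    if match is None:
        raise ValueError(f"unrecognized profile name: {name!r}")
    return int(match.group(1)), int(match.group(2))


def fits_on_one_gpu(profiles):
    """True if the requested instances stay within the compute and memory budget."""
    compute = 0
    memory = 0
    for name in profiles:
        slices, gigabytes = parse_profile(name)
        compute += slices
        memory += gigabytes
    return compute <= MAX_COMPUTE_SLICES and memory <= TOTAL_MEMORY_GB


print(fits_on_one_gpu(["1g.10gb"] * 7))          # seven small instances
print(fits_on_one_gpu(["3g.40gb", "4g.40gb"]))   # two larger instances
print(fits_on_one_gpu(["4g.40gb", "4g.40gb"]))   # exceeds the compute budget
```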

The Hopper architecture also introduces the industry’s first confidential computing capability in a GPU. Using dedicated hardware-level features, the H100 enables data to remain encrypted and secure even during computation. This is essential for industries like healthcare, finance, and defense where data sensitivity is critical.

In summary, the NVIDIA H100’s Hopper architecture represents a monumental shift in GPU design. Its fusion of computational horsepower, intelligent resource management, and built-in security creates a platform not just for today’s workloads, but for future challenges in AI, HPC, and cloud infrastructure.

The H100 excels at training state-of-the-art transformer-based models used in natural language processing. Its fourth-generation Tensor Cores support FP8 precision, enabling higher throughput and faster training times. For models in the class of OpenAI’s GPT, Google’s PaLM, Meta’s LLaMA, or DeepMind’s Chinchilla, the H100 reduces the time and energy required to train networks with tens to hundreds of billions of parameters.

From molecular dynamics to computational fluid dynamics (CFD) and seismic analysis, the H100 is built to accelerate floating-point intensive workloads. Its double-precision (FP64) performance and memory bandwidth up to 3.35 TB/s make it perfect for physics simulations and scientific computing.

Inference tasks such as real-time image classification, voice recognition, and recommendation systems benefit from the H100’s Transformer Engine and lower latency. The GPU’s support for FP8, FP16, and INT8 allows optimized performance for both small and large-scale inference scenarios.

The H100 speeds up large-scale analytics, including ETL pipelines, graph analytics, and big data workloads on platforms like RAPIDS and Spark. It lets companies process petabytes of data in less time.

Thanks to Multi-Instance GPU (MIG) capability, a single H100 can be partitioned into up to 7 isolated GPU instances. Cloud service providers use MIG to maximize GPU utilization while providing guaranteed quality of service to tenants.

With built-in secure enclaves and confidential computing capabilities, H100 ensures data integrity during training and inference of sensitive models. It’s ideal for applications in finance, healthcare, and defense where privacy and compliance are paramount.

The H100’s real-time AI performance supports decision-making systems for autonomous vehicles, drones, and robotics. Its compute power lets perception, path-planning, and object-detection models run efficiently.

At the heart of the NVIDIA H100 is the Hopper architecture, featuring fourth-generation Tensor Cores and FP8 support. NVIDIA cites up to 30x faster inference than the A100 on the largest language models, along with several times faster training of transformer-based networks.

Thanks to advanced interconnects like NVLink 4.0 and PCIe Gen5, H100 can be deployed in modular or distributed environments. The Multi-Instance GPU (MIG) feature allows partitioning of the GPU into multiple isolated instances, supporting various users and workloads simultaneously.

Hopper architecture optimizes power usage with a higher performance-per-watt ratio. Despite its extreme computational ability, H100 is more energy-efficient than its predecessors, making it suitable for data centers with sustainability goals.

With cutting-edge support for PCIe Gen5, NVLink 4, and HBM3 memory, the H100 is built to accommodate future workloads. It also supports confidential computing, secure boot, and encryption features for upcoming security standards.

The NVIDIA H100 is tightly integrated with the full NVIDIA software stack: CUDA 12, cuDNN, TensorRT, NVIDIA AI Enterprise, and NCCL, enabling developers to use state-of-the-art frameworks out of the box.

With built-in Confidential Computing capabilities, the H100 ensures data remains secure during both training and inference. This makes it suitable for regulated industries such as healthcare, finance, and government.

NVIDIA H100 is designed to handle a wide spectrum of AI and HPC applications—ranging from LLMs to seismic modeling, genomic research, and real-time analytics. Its ability to switch between precision formats (FP64, FP32, TF32, FP16, FP8, INT8) enables custom optimization per workload.

The NVIDIA H100 is designed to work seamlessly with the following systems:

The NVIDIA H100 also integrates with NVIDIA software stacks such as CUDA 12, cuDNN, TensorRT, NCCL, and the NVIDIA AI Enterprise suite, giving AI developers a plug-and-play experience.

The NVIDIA H100 Tensor Core GPU represents the pinnacle of modern GPU engineering. It combines raw power with intelligent architecture to drive the most demanding AI, HPC, and analytics workloads. Whether you’re building the next breakthrough in language models, simulating complex systems, or deploying secure, multi-tenant infrastructure in the cloud—H100 provides the foundation for innovation.

With support for industry-leading software ecosystems and cutting-edge hardware features, the H100 is more than just a GPU—it’s a platform for the future of accelerated computing.

Unmatched AI and HPC Performance with Hopper Architecture

Powered by the breakthrough NVIDIA Hopper™ architecture, the H100 delivers extraordinary acceleration for the most demanding AI training, inference, and high-performance computing (HPC) workloads — redefining what’s possible in data centers and supercomputing environments.

Transformative Tensor Core Technology (4th Generation)

Featuring 4th-generation Tensor Cores with FP8 precision support, the H100 achieves unprecedented AI throughput and efficiency. It delivers up to 6x higher performance than previous-generation GPUs for transformer-based models and deep learning workloads.

Massive 80 GB HBM3 Memory

With 80 GB of ultra-fast HBM3 memory and over 3 TB/s of memory bandwidth, the H100 is engineered to handle massive datasets and complex model training with ease and speed.

NVLink and PCIe Gen 5 Support

The H100 offers high-bandwidth interconnect technologies, including NVLink® and PCI Express Gen 5.0, enabling fast data sharing between GPUs and CPUs, minimizing bottlenecks in multi-GPU configurations.

Transformer Engine for AI Innovation

The NVIDIA Transformer Engine is specifically designed to accelerate AI models such as GPT, BERT, and other LLMs. It dynamically adjusts numerical precision to maximize performance without compromising accuracy.

Scalable for Enterprise and Cloud AI

Whether deployed in NVIDIA DGX™ systems, on-premise data centers, or public cloud platforms, the H100 scales seamlessly across environments to power AI infrastructure at every level.

Confidential Computing and Enhanced Security

The H100 is the world’s first GPU with confidential computing capabilities, allowing secure data processing through encrypted memory and secure enclaves — ideal for privacy-sensitive industries such as healthcare and finance.

Versatile for AI, HPC, and Data Analytics

From training trillion-parameter foundation models to accelerating simulations and data analytics, the H100 is a versatile powerhouse for next-generation computing.

All rights reserved for Q9 technologies.