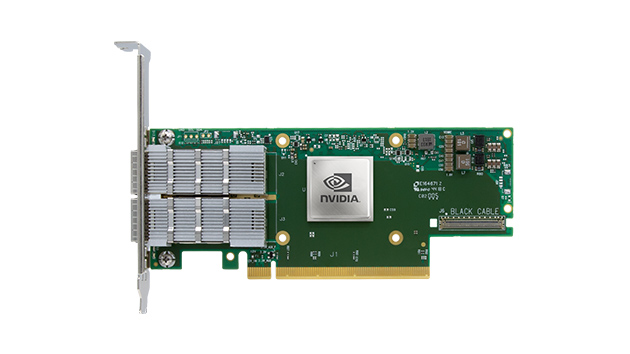

Product: NVIDIA ConnectX-6 InfiniBand Smart Adapters

Port Options: Single or Dual QSFP56 ports

Data Rate: Up to 200Gb/s InfiniBand and Ethernet

PCIe Support: PCIe 3.0 x16 / 4.0 x8 or x16

Technologies:

- In-Network Computing Acceleration
- Smart Offloads: RDMA, GPUDirect
- VPI (Virtual Protocol Interconnect)

Benefits:

- Ultra-low latency
- High throughput
- Scalability for HPC, AI, and cloud workloads
- Reduced CPU overhead with smart offloads
- Flexible crypto support options

High-Speed, Scalable, and Intelligent Network Connectivity for HPC, AI, and Cloud Workloads

In today’s data-driven world, high-performance computing (HPC), artificial intelligence (AI), and large-scale cloud infrastructures require more than just fast processors.

Efficient networking plays a crucial role in ensuring applications run smoothly, reliably, and at scale. NVIDIA ConnectX-6 InfiniBand Adapters deliver exactly that.

Designed as part of the NVIDIA Quantum InfiniBand platform, ConnectX-6 adapters are engineered to handle the most complex workloads with unprecedented speed, reliability, and flexibility.

These smart network interface cards (NICs) offer up to two ports of 200Gb/s InfiniBand and Ethernet connectivity, combined with NVIDIA’s unique In-Network Computing acceleration technologies.

ConnectX-6 adapters deliver data rates of up to 200 gigabits per second (Gb/s), supporting both InfiniBand HDR and 200Gb Ethernet (200GbE).

This enables data centers to handle massive workloads such as scientific simulations, large-scale AI model training, and hyperscale storage operations effortlessly.

Depending on infrastructure needs, customers can choose from single or dual port configurations.

Dual port cards are especially beneficial in environments where high availability, failover, or increased bandwidth is required.

ConnectX-6 adapters support both PCIe 4.0 and PCIe 3.0 buses. This ensures compatibility with a wide range of server architectures, while also unlocking the full potential of newer systems that take advantage of PCIe 4.0’s higher bandwidth.
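To make the PCIe point concrete, the following is a minimal back-of-the-envelope sketch comparing PCIe link bandwidth with the adapter's port rates. It uses the standard per-lane signalling rates (8 GT/s for PCIe 3.0, 16 GT/s for PCIe 4.0) and 128b/130b line encoding. The function names are illustrative, and the figures ignore packet and protocol overhead, so real-world throughput will be somewhat lower.

```python
# Illustrative arithmetic only: PCIe link bandwidth versus adapter port rate.
# Packet (TLP) and protocol overhead are NOT modelled, so these are upper bounds.

# Per-lane signalling rate in GT/s; both generations use 128b/130b line encoding.
GT_PER_LANE = {"3.0": 8.0, "4.0": 16.0}
ENCODING_EFFICIENCY = 128 / 130


def pcie_gbps(generation: str, lanes: int) -> float:
    """Return one-direction link bandwidth in Gb/s after line encoding."""
    return GT_PER_LANE[generation] * ENCODING_EFFICIENCY * lanes


def link_can_feed(generation: str, lanes: int, port_gbps: float) -> bool:
    """True if the PCIe link bandwidth is at least the port's line rate."""
    return pcie_gbps(generation, lanes) >= port_gbps


if __name__ == "__main__":
    for gen, lanes in [("3.0", 16), ("4.0", 8), ("4.0", 16)]:
        print(
            f"PCIe {gen} x{lanes}: {pcie_gbps(gen, lanes):.1f} Gb/s, "
            f"feeds 100Gb/s: {link_can_feed(gen, lanes, 100)}, "
            f"feeds 200Gb/s: {link_can_feed(gen, lanes, 200)}"
        )
```

Under these assumptions, PCIe 3.0 x16 and PCIe 4.0 x8 both come to roughly 126 Gb/s, enough for a 100Gb/s port but not a 200Gb/s one, while PCIe 4.0 x16 comes to roughly 252 Gb/s.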

NVIDIA’s In-Network Computing technology allows critical data operations to happen directly within the network, rather than at the server endpoint.

This reduces data movement overhead, decreases latency, and dramatically improves workload efficiency for distributed applications in HPC, AI, and cloud services.

By offloading complex networking tasks from the CPU to the ConnectX-6 adapter, system resources are freed up to handle core application processing.

Remote Direct Memory Access (RDMA) support enables ultra-low latency data transfers between servers with minimal CPU involvement, making it well suited to latency-sensitive applications.

ConnectX-6 is equipped with VPI technology, allowing each port to be configured for either InfiniBand or Ethernet operation.

This dual-protocol capability provides unmatched flexibility for hybrid network environments.
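As a rough sketch of what selecting a port protocol on a VPI adapter can look like, the helper below builds a command line for the `mlxconfig` utility from the NVIDIA firmware tools, using its `LINK_TYPE_P1` / `LINK_TYPE_P2` parameters (1 for InfiniBand, 2 for Ethernet). The helper name and the device path in the usage example are hypothetical placeholders; the code only constructs the command and does not run it. Check the firmware tools documentation for your adapter before applying any change.

```python
import shlex

# mlxconfig LINK_TYPE values: 1 selects InfiniBand, 2 selects Ethernet.
LINK_TYPE = {"ib": 1, "eth": 2}


def build_link_type_cmd(device: str, port: int, protocol: str) -> list[str]:
    """Build (but do not run) an mlxconfig command that sets a port's protocol.

    `device` is whatever device identifier your system exposes; the value
    used in the example below is a placeholder, not a real path.
    """
    if port not in (1, 2):
        raise ValueError("port must be 1 or 2")
    if protocol not in LINK_TYPE:
        raise ValueError("protocol must be 'ib' or 'eth'")
    return [
        "mlxconfig", "-d", device,
        "set", f"LINK_TYPE_P{port}={LINK_TYPE[protocol]}",
    ]


if __name__ == "__main__":
    # Hypothetical device path, for illustration only.
    cmd = build_link_type_cmd("/dev/mst/mt4123_pciconf0", port=1, protocol="eth")
    print(shlex.join(cmd))
```

Building the command as an argument list rather than a single string keeps it safe to pass to `subprocess.run` later without shell quoting issues.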

| Feature | Description |
| --- | --- |
| Product Name | NVIDIA ConnectX-6 InfiniBand Adapters |
| Data Rate | 100Gb/s or 200Gb/s, InfiniBand and Ethernet (varies by SKU) |
| Ports | 1 or 2 (QSFP56) |
| PCIe Interface | PCIe 3.0 x16, PCIe 4.0 x8/x16 |
| Network Interface | QSFP56 |
| Technology | InfiniBand HDR / HDR100 / EDR and 100/200GbE |
| Offload Capabilities | RDMA, GPUDirect, SR-IOV, In-Network Computing |
| Compatibility | Compatible with all major server vendors supporting PCIe 3.0/4.0 |
| Security | Hardware isolation, secure boot, and cryptographic features (varies by SKU) |

| SKU | Model | Ports | PCIe | Data Rate | Crypto |
| --- | --- | --- | --- | --- | --- |
| 900-9X6AF-0018-MT0 | MCX653105A-HDAT-SP | Single | PCIe 4.0 x16 | 200GbE/HDR | – |
| 900-9X6AF-0058-ST2 | MCX653106A-HDAT-SP | Dual | PCIe 4.0 x16 | 200GbE/HDR | – |
| 900-9X6AF-0016-ST2 | MCX653105A-ECAT-SP | Single | PCIe 3.0/4.0 x16 | HDR100/EDR/100GbE | – |
| 900-9X6AF-0056-MT0 | MCX653106A-ECAT-SP | Dual | PCIe 3.0/4.0 x16 | HDR100/EDR/100GbE | – |
| 900-9X628-0016-ST0 | MCX651105A-EDAT | Single | PCIe 4.0 x8 | HDR100/EDR/100GbE | – |
| 900-9X657-0058-SB0 | MCX653436A-HDAB | Dual | PCIe 4.0 x16 | HDR/200GbE | – |
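For readers who want to shortlist a model programmatically, here is a small sketch that encodes the SKU table as data and filters it by port count and minimum per-port rate. The `Sku` class, the `find_skus` helper, and the `max_gbps` field are illustrative assumptions: `max_gbps` is read off the Data Rate column as 200 for the HDR/200GbE rows and 100 for the HDR100/EDR/100GbE rows.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sku:
    sku: str
    model: str
    ports: int      # 1 = single port, 2 = dual port
    pcie: str
    max_gbps: int   # assumption: derived from the Data Rate column


# Rows copied from the SKU table; nothing here is measured or queried.
SKUS = [
    Sku("900-9X6AF-0018-MT0", "MCX653105A-HDAT-SP", 1, "PCIe 4.0 x16", 200),
    Sku("900-9X6AF-0058-ST2", "MCX653106A-HDAT-SP", 2, "PCIe 4.0 x16", 200),
    Sku("900-9X6AF-0016-ST2", "MCX653105A-ECAT-SP", 1, "PCIe 3.0/4.0 x16", 100),
    Sku("900-9X6AF-0056-MT0", "MCX653106A-ECAT-SP", 2, "PCIe 3.0/4.0 x16", 100),
    Sku("900-9X628-0016-ST0", "MCX651105A-EDAT", 1, "PCIe 4.0 x8", 100),
    Sku("900-9X657-0058-SB0", "MCX653436A-HDAB", 2, "PCIe 4.0 x16", 200),
]


def find_skus(ports: int, min_gbps: int) -> list[Sku]:
    """Return table rows with the given port count and at least min_gbps per port."""
    return [s for s in SKUS if s.ports == ports and s.max_gbps >= min_gbps]


if __name__ == "__main__":
    for s in find_skus(ports=2, min_gbps=200):
        print(s.sku, s.model, s.pcie)
```

A frozen dataclass keeps each row immutable, which suits a static catalogue like this one.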

✔ Industry-Leading Speed and Efficiency: The 200Gb/s throughput combined with In-Network Computing creates unparalleled network acceleration.

✔ Future-Proof Compatibility: PCIe 4.0 support ensures your infrastructure investment remains relevant for years to come.

✔ Flexibility Across Protocols: With VPI, ConnectX-6 provides InfiniBand and Ethernet in a single adapter, simplifying deployment and scaling.

✔ Reduced TCO: Smart offloads and RDMA reduce server CPU usage, lowering power consumption and operational costs over time.

✔ Proven Reliability: NVIDIA’s engineering quality and global support make ConnectX-6 a trusted choice for mission-critical applications.

The NVIDIA ConnectX-6 InfiniBand Adapters represent a new benchmark in smart networking for HPC, AI, and cloud environments.

Combining blazing-fast speeds, advanced offload capabilities, and versatile configuration options, ConnectX-6 enables organizations to scale their computing power efficiently while maintaining reliability and control.

Whether building a next-generation AI cluster or upgrading an existing HPC system, ConnectX-6 delivers the performance and flexibility modern data centers demand.

Product Name: NVIDIA ConnectX-6 InfiniBand Adapters

Data Rates: HDR / HDR100 / EDR InfiniBand; 100GbE / 200GbE

Port Configuration: Single or Dual QSFP56 Ports

PCI Express Interface: PCIe 3.0 x16, PCIe 4.0 x8/x16

Network Interface: QSFP56 Connector

Supported Technologies:

- VPI (Virtual Protocol Interconnect)
- In-Network Computing Acceleration
- Smart Offloads (RDMA, GPUDirect, etc.)

Latency: Ultra-low latency optimized for HPC and AI workloads

Message Rate: High message rate performance

Crypto Capabilities: Available on select models

Compatibility: Supports a wide range of HPC, AI, and cloud server platforms

Dual and Single-Port Configurations: Offers one or two QSFP56 ports, each supporting 100Gb/s or 200Gb/s depending on the model.

High-Speed Connectivity: Delivers ultra-low latency and high throughput up to 200Gb/s, ideal for HPC, AI, and cloud infrastructures.

PCIe Flexibility: Supports both PCIe 3.0 and PCIe 4.0 interfaces, maximizing compatibility with modern server platforms.

In-Network Computing: Enables offloading complex data processing tasks directly onto the network, reducing server load and boosting application efficiency.

Smart Offloads: Supports RDMA, GPUDirect, and other offload engines that enhance scalability and reduce CPU utilization.

Versatile Protocol Support: Compatible with InfiniBand HDR, EDR, and 100/200Gb Ethernet using VPI (Virtual Protocol Interconnect) technology.

Scalable Architecture: Designed for large-scale deployments in data centers, supporting extreme-size datasets and parallel computing.

Crypto Support Options: Select SKUs include cryptographic capabilities for secure data transmission.


All rights reserved for Q9 technologies.